Architecture

Shared storage based on local devices: NVMe, SAS SSD, virtual disks

Storage devices attached to compute nodes (hyper-converged) or in separate storage nodes

Standard x86 servers or VMs used as compute and storage nodes

Fully distributed architecture with no single point of failure

Mirroring of data across nodes, data centers, or cloud availability zones

Network connectivity using commodity Ethernet

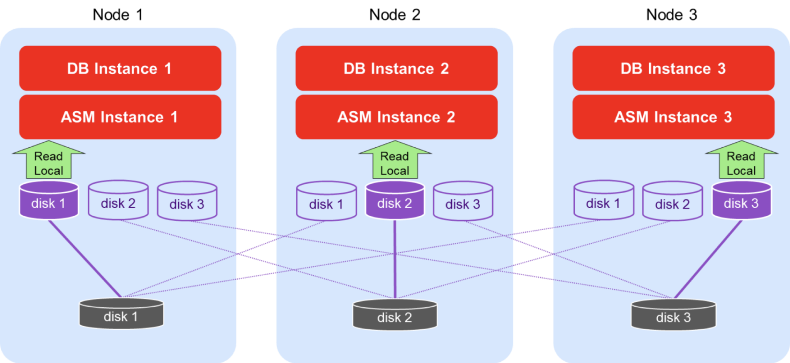

FlashGrid Read-Local Technology minimizes network overhead by serving reads from local storage devices

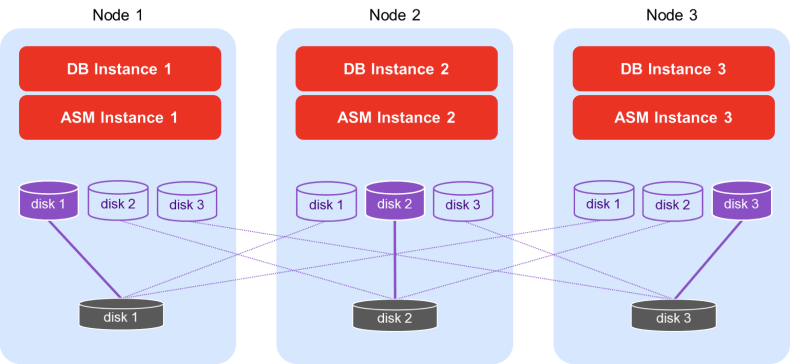

Shared storage access

FlashGrid Storage Fabric makes every storage device accessible from every database node in the cluster.

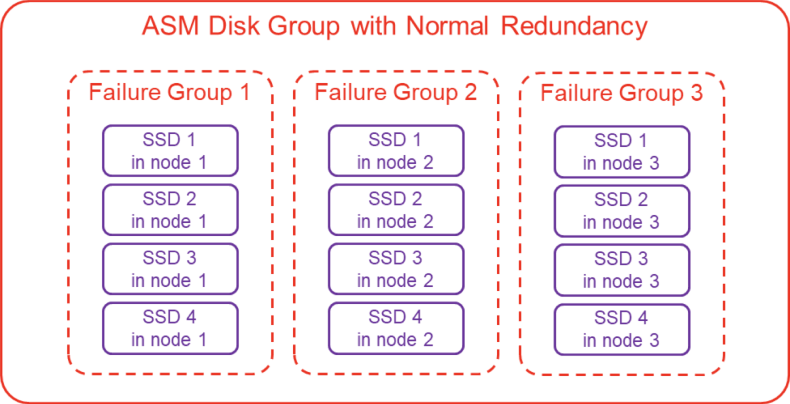

High availability and data mirroring

FlashGrid has a fully distributed architecture with no single point of failure and relies on Oracle ASM’s existing mirroring capabilities. In Normal Redundancy mode each block of data has two mirrored copies; in High Redundancy mode it has three.

Each ASM disk group is divided into failure groups, one per node. Each disk belongs to the failure group that corresponds to the node where the disk is physically located. ASM places the mirrored copies of a block in different failure groups, so no two copies of a block reside on the same node.

As a result, in Normal Redundancy mode the cluster can withstand the loss of one node (converged or storage) without interruption of service. In High Redundancy mode it can withstand the loss of two.
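The placement rule can be illustrated with a small Python model. This is a minimal sketch, not FlashGrid or ASM code: the function names, the round-robin placement, and the node names are hypothetical. It checks only whether at least one copy of every block survives, and ignores quorum, voting, and rebalancing.

```python
from itertools import combinations


def place_copies(block_id, failure_groups, copies):
    """Pick `copies` distinct failure groups for one block.

    Round-robin placement is an assumption made for illustration;
    the only property that matters here is that the groups are distinct.
    """
    n = len(failure_groups)
    if copies > n:
        raise ValueError("need at least as many failure groups as copies")
    start = block_id % n
    return {failure_groups[(start + i) % n] for i in range(copies)}


def survives(placements, failed_nodes):
    """Return True if every block keeps at least one copy on a surviving node."""
    failed = set(failed_nodes)
    return all(groups - failed for groups in placements)


def max_tolerated_failures(failure_groups, copies, blocks=100):
    """Largest k such that losing ANY k nodes leaves every block readable."""
    placements = [place_copies(b, failure_groups, copies) for b in range(blocks)]
    tolerated = 0
    for k in range(1, len(failure_groups) + 1):
        if all(survives(placements, lost)
               for lost in combinations(failure_groups, k)):
            tolerated = k
        else:
            break
    return tolerated


if __name__ == "__main__":
    nodes = ["node1", "node2", "node3"]  # hypothetical node names
    print("2 copies:", max_tolerated_failures(nodes, copies=2))
    print("3 copies:", max_tolerated_failures(nodes, copies=3))
```

Under these assumptions, two copies per block tolerate the loss of any one node and three copies tolerate the loss of any two, which matches the Normal and High Redundancy behavior described above.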

FlashGrid Read-Local™ Technology

In converged clusters, read traffic can be served from local SSDs at the speed of the PCIe bus instead of travelling over the network. In 2-node clusters with 2-way mirroring or 3-node clusters with 3-way mirroring, 100% of the read traffic is served locally because each node holds a full copy of all data. Because reads no longer compete for network bandwidth, write operations are faster too. As a result, even a 10 GbE network fabric can be sufficient for achieving outstanding performance in such clusters for both data warehouse and OLTP workloads. For example, a 3-node cluster with four NVMe SSDs per node can provide 30 GB/s of read bandwidth, even on a 10 GbE network.
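The arithmetic behind these figures can be sketched in a few lines of Python. The helper names are hypothetical, and the 2.5 GB/s per-SSD read throughput is an assumption chosen to be consistent with the 30 GB/s example; the source does not state a per-device figure. The local-read fraction assumes mirrored copies are spread evenly across nodes.

```python
def local_read_fraction(nodes, copies):
    """Fraction of data that has a copy on a given node.

    Assumes mirrored copies are spread evenly across nodes, so each
    node holds copies/nodes of the data (capped at 100%).
    """
    if nodes < 1 or copies < 1:
        raise ValueError("nodes and copies must be positive")
    return min(1.0, copies / nodes)


def aggregate_read_bandwidth_gbs(nodes, ssds_per_node, ssd_read_gbs):
    """Total local read bandwidth in GB/s when all reads are served locally."""
    return nodes * ssds_per_node * ssd_read_gbs


def link_bandwidth_gbs(gigabits_per_second):
    """Raw line rate of a network link converted from Gb/s to GB/s."""
    return gigabits_per_second / 8


if __name__ == "__main__":
    # 2-node/2-way and 3-node/3-way clusters: every node has all data.
    print(local_read_fraction(nodes=2, copies=2))
    print(local_read_fraction(nodes=3, copies=3))

    # ASSUMPTION: 2.5 GB/s sequential read per NVMe SSD.
    print(aggregate_read_bandwidth_gbs(nodes=3, ssds_per_node=4, ssd_read_gbs=2.5))

    # For comparison: raw line rate of a single 10 GbE link.
    print(link_bandwidth_gbs(10))
```

A single 10 GbE link carries at most 1.25 GB/s, so the aggregate read bandwidth in this example is only reachable because the reads never cross the network.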

Designed for Oracle clusters

Integrates seamlessly with Oracle Clusterware and ASM

Easy to use for Oracle DBAs

Technical support with deep Oracle expertise