‘Extra-large Oracle databases on AWS’ article featured in RMOUG newsletter

May 5, 2020

The Rocky Mountain Oracle Users Group has released the next issue of its RMOUG Update newsletter, featuring an article by Art Danielov, CEO and CTO of FlashGrid. Titled “The Sky Is the Limit: Extra-Large Oracle Databases on AWS”, the article proposes practical architectures that make it possible to run gigantic Oracle databases of 100 TB or more on AWS EC2 infrastructure.

Here is an excerpt from the article:

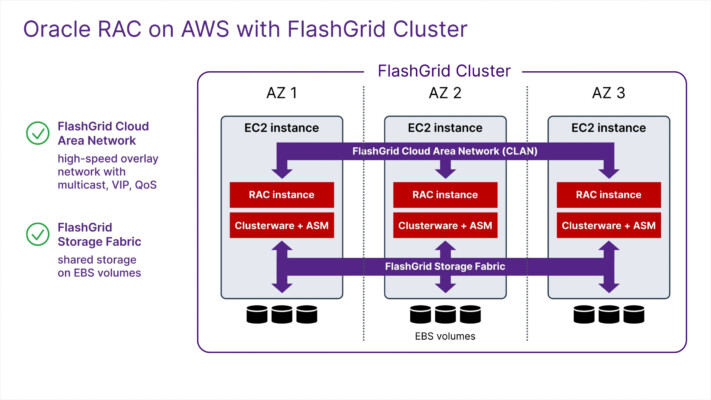

At first glance, it would appear that extra-large Oracle databases are easy enough to create in AWS because a single EBS volume can be as large as 16 TiB, and because multiple EBS volumes can be attached to an EC2 instance. That works, but only if you don’t also require high network throughput. Remember that EBS is network-attached storage. AWS typically throttles EBS throughput based on the storage type (gp2, io1, etc.) and size of the EBS volume. Further, AWS also throttles throughput depending on the EC2 instance type. If multiple high-throughput EBS volumes are attached to an instance with limited bandwidth, the drives will never reach their maximum capacity. As an example, an r5n.24xlarge instance has a maximum throughput of 2,375 MB/s. Regardless of the number or size of the EBS volumes you attach to it, the instance isn’t capable of providing higher throughput than that.

Then there is the matter of high availability, for which Oracle RAC is the usual solution. However, Oracle RAC has the following infrastructure requirements that are not directly available in AWS:

- Shared high-performance storage accessible from all nodes in the cluster;

- A multicast-enabled network between all nodes in the cluster; and

- Separate networks for different types of traffic: client, cluster interconnect, and storage.
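The instance-level throughput cap described in the excerpt can be illustrated with a small calculation. The following is a minimal sketch, not taken from the article: the function name and the per-volume figures are hypothetical, and only the 2,375 MB/s limit for r5n.24xlarge comes from the text above.

```python
def effective_throughput_mb_s(volume_limits_mb_s, instance_limit_mb_s):
    """Return the achievable aggregate EBS throughput in MB/s.

    The volumes' combined throughput is capped by the instance's own
    EBS bandwidth limit, whichever is lower.
    """
    return min(sum(volume_limits_mb_s), instance_limit_mb_s)


# Instance limit quoted in the excerpt for r5n.24xlarge.
R5N_24XLARGE_LIMIT_MB_S = 2375

# Hypothetical per-volume throughput limits, chosen only for illustration.
four_volumes = [1000, 1000, 1000, 1000]
two_volumes = [1000, 1000]

# Four volumes could deliver 4,000 MB/s in total, but the instance caps it.
print(effective_throughput_mb_s(four_volumes, R5N_24XLARGE_LIMIT_MB_S))  # 2375

# Two volumes stay under the instance limit, so the volumes are the bottleneck.
print(effective_throughput_mb_s(two_volumes, R5N_24XLARGE_LIMIT_MB_S))  # 2000
```

Under these assumed numbers, adding volumes beyond the third does not raise throughput on a single instance, which is why the article looks at architectures that spread storage across multiple instances.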
